Notes from Code with Claude 2026

I attended Anthropic's Code with Claude developer conference in San Francisco on May 6th. Here's what I learned about context management, the shifting bottlenecks in software engineering, and what it looks like to run an AI-native engineering org.

When I saw that Anthropic was hosting its second Code with Claude developer conference and opening a ticket lottery, I applied immediately. I was surprised to get a ticket. I know several colleagues and friends who weren't able to get a spot. In fact, interest was so high that Anthropic added a second day specifically for independent developers and early-stage founders who couldn't attend day one. Attending in person was a great opportunity to network with and learn from the people building Claude and the teams shipping with it.

This was an informative and thought-provoking conference. I came away with a better understanding of emerging trends, a clearer sense of what other builders are working on, and a sharper view than I expected of how the role of a software engineer is changing.

In this post, I'll recap the conference and major announcements, share the themes I noticed across sessions and conversations, and close with what I'm taking back to my own work.

About the Code with Claude Conference

Anthropic's Code with Claude Developer Conference is geared toward software developers, product managers, and technical leaders who are actively building with AI. The 2026 conference kicked off in San Francisco on May 6th, then travels to London on May 19th before closing in Tokyo on June 10th. The conference offers a mix of keynotes, workshops, presentations, and 1:1 sessions. Most talks were given by Anthropic employees, with a handful of partnership sessions from representatives from GitHub, Amazon, Datadog, Vercel, Cursor, and Microsoft.

The San Francisco conference I attended was hosted at SVN West. The venue had three levels and drew a few thousand attendees. I saw a good mix of attendees from startups, mid-sized companies, enterprise organizations, and consulting firms. Most were from the Bay Area, but I also ran into people like myself who flew out for it. Everything was well organized: check-in, security, and the sessions themselves all ran smoothly. The food was better than most conferences I've attended. My only complaint is that the Wi-Fi was a bit spotty during the workshops, which made it difficult to follow along at times.

One thing that stood out was the number of women in technical and leadership roles at Anthropic, which is notable in a tech industry that is often male-dominated. The keynote opened with Chief Product Officer Ami Vora, followed by Dianne Na Penn, Head of Product for Research, along with Katelyn Lesse, Head of Platform Engineering, Angela Jiang, Head of Claude Platform Product, and Cat Wu, Head of Product for Claude Code. Nearly all of the keynote presenters were women. Boris Cherny, Head of Claude Code and the creator of the product, closed the keynote.

It was also interesting that the keynote was led by product heads and engineers rather than CEO Dario Amodei. I see this as a signal about what kind of company Anthropic aspires to be, and that AI-native companies have a much stronger product focus.

Announcements

Anthropic didn't announce a ton of new product launches or a new model. Access to Mythos remains limited to a few organizations. Instead, the focus was on improving what already exists, a theme in many sessions.

The most significant announcement was Anthropic's compute deal with SpaceX, which gives users higher subscription usage limits and higher API rate limits. Anthropic has been known to be compute constrained for a while, so any additional capacity should help. This follows an announcement of a compute deal with Amazon Web Services, which also provides Anthropic with additional capacity. Dario Amodei shared that demand has grown a startling 80x so far this year, underscoring the importance of these deals for Anthropic's continued growth. There's been ongoing speculation that Opus 4.7 was quietly “nerfed” due to compute pressure, so it'll be interesting to see whether this partnership changes that.

Anthropic is rapidly developing Claude Managed Agents, which entered public beta on April 8th, 2026. Three new features were announced during the keynote: Multiagent Orchestration, which lets you scale a fleet of agents to break down complex tasks; Outcomes, which lets you define success criteria so agents can iterate and improve over time; and Dreaming, which allows Claude to recall previous sessions and build on past work. You can learn more about these new capabilities here.

During the keynote, Dianne Penn hinted that Anthropic is working on "context windows that feel infinite." The comment generated some chatter online, with people interpreting it as a signal that near-infinite context is imminent. Sessions later in the day told a different story. Context window limits have stayed constrained to roughly 1 million tokens for over a year across AI providers. We're not seeing exponential growth in context window size, and several engineering-led sessions reflected that reality rather than the keynote's more aspirational messaging.

I did not recall any mention of Claude Security, which entered public beta for Claude Enterprise customers on April 30th. Given that AI is both accelerating vulnerability discovery and playing an increasingly critical role in defense, the lack of coverage surprised me.

Themes

Across the day's sessions and the conversations between them, a handful of ideas kept surfacing. I’ll share the major ones that stuck with me below.

The context window is still a box

Like humans, models have limited short-term memory. Context windows are still constrained and likely to stay that way for the foreseeable future. Daisy Hollman, a Member of Technical Staff at Anthropic, led a workshop titled "Beyond the Basics with Claude Code" and spent a significant portion of it making exactly this point. While other model capabilities have scaled remarkably, context windows have remained roughly the same for over a year. She doesn't see that changing anytime soon, and she argued that one of the harder engineering challenges in building agents is choosing the right information to put into a fixed box. Engineers are investing real time finding ways to use that box more effectively.

Stuffing your CLAUDE.md with every standard, convention, and acronym your team uses may feel like a good idea, but you pay for all of it on every turn. That can result in worse, more expensive model performance, not better.

Claude Code has a few main plugin abstractions: CLAUDE.md, MCP, skills, hooks, and subagents. MCP is the universal standard, but full tool descriptions can eat valuable context, even with recent improvements that more lazily load definitions. Twenty MCP servers, each exposing fifteen tools, means your prompt is mostly tool definitions before Claude reads a single line of your code or task. Daisy noted that if you already have a CLI that does the job, it's often more efficient to let Claude shell out to it than to wrap that capability in an MCP server. The MCP protocol was designed at a time when it wasn't a given that Claude would have access to a file system or computer use, which explains some of the protocol's design choices. Skills and subagents can also help manage context more effectively than MCP servers.

Hooks are worth calling out separately. Hooks are the only abstraction that don't consume context until they fire. Daisy encouraged using hooks to guide Claude during its work, describing them as "red squigglies for agents", small corrections injected at the moment of a mistake rather than caught later in review. Hooks aren’t a solution to every problem, but they can have a dramatic impact on context efficiency by selectively adding content to the box only when it's needed.

Brad Abrams, who leads product on the Claude Platform, put numbers to the same idea later in the day. His recommendation: aim for at least an 80% prompt cache hit rate in production agents before you do anything else. Cursor, Replit, Perplexity, and Claude Code itself all run their caching rates in the 90s. If your production agents aren't hitting similar numbers, that's where to start. Cached tokens are cheaper, faster, and don't count against your rate limits. Brad laid out a few simple rules: keep unused tool schemas out of context, keep raw tool output out of context, and use programmatic tool calling so the model can write code to inspect a tool result and pull only what it needs.

The shape of data matters too, not just the volume. In his session "Build a Proactive Agent Workflow with Claude Code," Noah Zweben shared an example of a sports company that reduced token usage by 66% simply by switching tool output from JSON to markdown, removing unneeded fields, and removing timestamps from tool output. These changes not only reduced cost but also improved Claude’s results.

While Dianne shared an aspirational vision of “context windows that feel infinite” in her keynote, the rest of the day told a different story. The box is not getting bigger anytime soon. Managing context effectively will remain one of the most important skills for anyone building with AI.

The bottleneck has moved

Several speakers came at this from different angles, but there was a common theme: writing code is no longer the slow part. Verifying it, reviewing it, coordinating around it, and figuring out what to build in the first place are now the slow parts. Fiona Fung put it most directly in her session on running an AI-native engineering org: "the bottleneck moved from coding to everything around coding." The old constraint was bandwidth, the time it took to write the code, write the tests, and do the refactor. That constraint is gone, or going. The new constraints are review capacity, verification, cross-functional coordination, and security. While these constraints always existed, they’re now the primary bottlenecks.

Noah Zweben's session put numbers on it. He showed a chart of his own team's weekly PRs merged to main. In three months, weekly PR throughput went up 300%, from around 500 in January to roughly 1,150 in March. The problem this caused was that his team only has a single engineer dedicated to documentation. To avoid documentation becoming a bottleneck, they had to rethink how they could be more efficient by using Claude and agentic workflow routines to keep documentation current asynchronously overnight.

Boris Cherny's live coding session with Jarred Sumner made the same point in a different way. Jarred showed off Robobun, an autonomous Claude Code agent he built that works on the Bun codebase. Robobun has now made more commits to Bun than Jarred himself. Jarred was the creator and main maintainer of this project, but with Robobun, more PRs are being closed, and new features are being added much more quickly. Jarred also described a workflow in which he has CodeRabbit and Claude Code review the same PR and effectively debate it. CodeRabbit flags style and convention issues and Claude reasons about code chains and downstream effects. He lets the agents work on the PR in the background and reviews the final code output after several iterations.

The pattern across these examples is the same: the shape of engineering work is shifting from writing code and reviewing it, to specifying the work, dispatching one or more agents, and reviewing and evaluating what comes back. Daisy Hollman illustrated this well by describing her own work supervising fleets of asynchronous agents and telling attendees, 'You should be running agents overnight.' Six months ago, that would have sounded like a stretch, but it's possible now, thanks to models like Opus 4.7, which can run autonomously for several hours in auto mode.

Build for the next model

All of the speakers from Anthropic were clearly convinced that models will continue to improve exponentially for the foreseeable future. Every session I attended pushed the same idea: things that didn't work months ago work now, and attendees were encouraged to keep retrying use cases that had previously failed whenever a new model ships. I heard the term "riding the exponential curve" several times throughout the conference. I'm personally not fully convinced that models can continue to improve exponentially, and I suspect there's some marketing behind that messaging, but it was clear that the Anthropic speakers genuinely believe it.

Matt Bleifer's talk, "The Thinking Lever", illustrated this with a side-by-side demo. He gave the same prompt to several different models at different effort levels: build a traffic simulation for a one-way street with a stoplight. Watching the outputs side by side made it clear how fast models are improving. More advanced models produced dramatically better simulations in terms of graphics and complexity.

Dario Amodei suggested models have much further to go, and that the focus is starting to shift from individual productivity to team and organizational productivity. He sees future models capable of performing tasks on behalf of entire teams, business units, and even entire organizations. Software engineers have been the early adopters partly because code is unusually verifiable. Dario shared that Anthropic is investing heavily in research that would let models verify and improve their own work in less verifiable domains, opening up far more than just software.

While exponential improvements may not continue, it's worth pushing the boundaries of what's possible until we see a clear plateau. It’s worth maintaining a strong set of evals so you can actually tell when a new model helps you solve a problem you couldn't solve before.

AI engineering teams look different

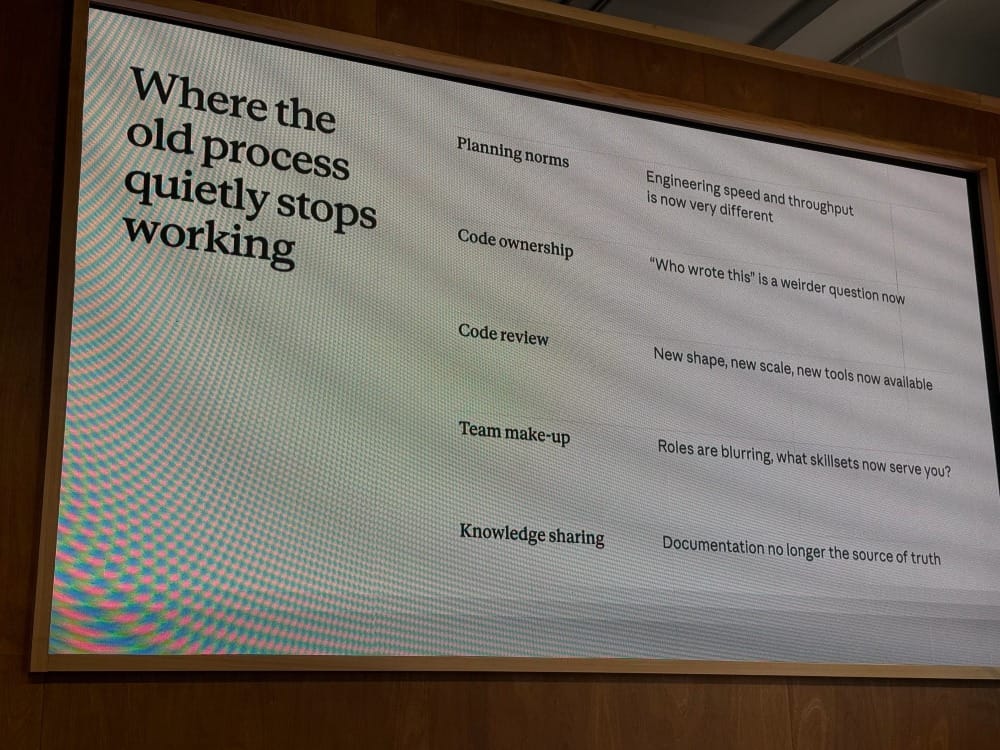

Fiona Fung's session on running an AI-native engineering org made the same point at the organization level that Daisy was making at the technical level. Code is no longer the bottleneck. The processes around engineers and team structure need to change. Most teams haven’t caught up.

Anthropic has reinvented several of its processes. For example, code review is shaped around human judgment on what actually needs deep review, not blanket coverage. Onboarding looks different now that the cost of asking a "dumb" question has gone to zero. Planning relies on prototyping rather than upfront design. "In technical debates, code wins," Fiona said. In situations where teammates disagree on a design or direction, the fastest way to settle it is to have Claude prototype both options and review the actual artifact. Building things is much less expensive than arguing or engaging in lengthy planning. The "design doc before any code" ritual is largely gone at Anthropic. Verification, on the other hand, has been doubled down on. Shifting verification left is the only way to maintain quality as throughput increases. Fiona admitted that even Anthropic has more work to do as it doubles down on verification.

Every engineer at Anthropic uses Claude Code, and Fiona encourages employees to "Claudify everything you can." Additionally, AI-native teams have explicit permission to kill old processes. Then there's room to adapt: how Claude shows up in a team's triage, what their planning rituals look like, which workflows get Claudified first. The forcing function makes adoption non-negotiable. The room to adapt makes adoption sustainable.

Summary

The conference made one thing crystal clear to me: my role as a software engineer is evolving into a more product-focused role managing a fleet of asynchronous agents. The most compelling thread running through everything, from Fiona's talk on rethinking business processes to the live demos on the floor, was that the question is no longer whether to integrate AI deeply into how you build, but how deliberately you do it. Forcing functions matter. Evals matter. And the engineers who thrive will be the ones who treat agents less like autocomplete and more like a team they're managing. Even though less time will be spent directly coding, I still see plenty of work for software engineers because producing useful software remains difficult and requires ongoing maintenance, iteration, and glue work.

Takeaways

Here are some personal takeaways I have from the conference and things I want to explore further:

- Spend more time on evaluations

- I haven't been investing enough in evaluations for the agentic workflows I'm helping ship at work as I should. That needs to change, and it's one of the most concrete action items I'm leaving with. There were good suggestions covered in workshops that I can incorporate to improve in that area.

- Explore Claude's managed agents offering

- There's significant product investment and innovation happening here. Concepts like dreaming and multi-agent orchestration should make it easier to build more capable agents while worrying less about infrastructure details.

- Use routines more for repetitive tasks

- Whether through claude.ai or the

/schedulecommand in Claude Code, I'm not using this nearly enough. Status checks, documentation drift, CVE patches, and dependency updates are exactly the kinds of recurring tasks that should run on a schedule, not sit on my mental to-do list.

- Whether through claude.ai or the

- Turn on flicker-free mode in Claude Code

- Boris mentioned there's an experimental flicker-free mode that will likely become the default in the near future. It improves performance, scrolling, and rendering while also reducing memory consumption.

- Reevaluate business processes with an agentic lens

- Fiona's talk was a useful reminder that it's not enough to Claudify individual tasks. The higher-leverage question is whether the underlying business process still makes sense at all in an agentic world.

- Most teams are still organized around assumptions that predate this moment. Planning, sprint sizing, code ownership, on-call rotations etc. were designed for a world where humans do the building. As agents take on more of the implementation work, the structures around them will need to be rethought, too.

- Use hooks more

- My use of hooks has been limited, but following Daisy's emphasis that hooks are the only abstraction that don't consume context until they fire, there are certainly areas where I can use them more to manage context better in the future.

- Experiment with long-running agent harnesses

- I saw a demo booth where a harness was building an Asana/Jira-like product autonomously across full sprints, with verification and deployment happening with minimal human intervention. The approach uses a multi-agent planner/generator/evaluator architecture designed to address the context and self-evaluation failure modes that cause naive implementations to go off the rails on complex tasks. The Anthropic engineering write-up is required reading. I need to build something real using this approach. This is the direction software engineering is moving in, and the best way to gain experience is to set it up yourself.

- The role is changing, and that's exciting

- Software engineering isn't disappearing, but for many, it's just operating at a different level and likely more product-focused. We can potentially build more interesting things and have a greater impact than ever before.

Recordings of the sessions will be released on Anthropic's YouTube channel in the coming weeks. Once these are released, these sessions are well worth watching for anyone building with AI. I left San Francisco with a clearer picture of where this is going, which is what a good conference should do.